Below are links to questions I have contributed to on StackOverflow. If you have time, or if any of the questions interest you, take a moment to review the question and its answers. If you feel one of the answers appropriately answers the question, please vote it up to better serve the community and future users looking for an answer to a similar question.

Accessing Salesforce Reports and Dashboards REST API Using C#

Introduction

If you have read any of my other posts, you know I have been working with the Salesforce REST API. I recently needed to access the Salesforce Reports and Dashboards REST API using C#. While spiking out a simple example, I did not come across much documentation on how to accomplish this. In this post I will walk through a quick spike on how to authenticate with the API and how to call it to get a report. The full code sample can be found Here on GitHub.

Prerequisites

- Salesforce Organization – Sign Up Here

- A Salesforce Connected App

- Visual Studio. Visual Studio Community 2015

Visual Studio

To access any Salesforce API you need a user account (username, password, token) and the consumer key/secret combination from the custom connected app. With these pieces of information, we can begin by creating a simple console application to spike out access to the Reports and Dashboards API. Next we need to install the NuGet packages used in the code below: the DeveloperForce library, which handles authentication, and RestSharp, which makes the report request.

Once these packages are installed we can utilize them to create a function to access the Salesforce reports and dashboards api.

var sf_client = new Salesforce.Common.AuthenticationClient();

sf_client.ApiVersion = "v34.0";

await sf_client.UsernamePasswordAsync(consumerKey, consumerSecret, username, password + usertoken, url);

Here we are taking advantage of some of the common utilities in the DeveloperForce package to create an authentication client, which gets us our access token from the Salesforce API. We will need that token next to start making requests to the API. Unfortunately, the DeveloperForce library cannot call the Reports and Dashboards API; we are using it here only to easily get the access token. This could all be done using RestSharp, but it's simpler to utilize what has already been built.

string reportUrl = "/services/data/" + sf_client.ApiVersion + "/analytics/reports/" + reportId;

var client = new RestSharp.RestClient(sf_client.InstanceUrl);

var request = new RestSharp.RestRequest(reportUrl, RestSharp.Method.GET);

request.AddHeader("Authorization", "Bearer " + sf_client.AccessToken);

var restResponse = client.Execute(request);

var reportData = restResponse.Content;

Since we have used the DeveloperForce package to set up the authentication, we can now use RestSharp and the access token to query the report API. In the code above we set up a RestSharp client with the Salesforce instance URL, then define the actual request for the report we want to execute. To make the request we also need to add the Salesforce access token to the Authorization header. With that in place, we can execute the request and receive the report data.
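For readers who would rather not take the RestSharp dependency, the same request can be sketched with the built-in HttpClient types. This is a swapped-in alternative, not the code from the sample project: the class name ReportRequestSketch and the method BuildReportRequest are made up for illustration, and the instance URL, API version, report id and token are all supplied by the caller.

```csharp
using System;
using System.Net.Http;
using System.Net.Http.Headers;

public static class ReportRequestSketch
{
    // Builds (but does not send) a GET request for a single report.
    // All four arguments are assumed to come from the authentication step above.
    public static HttpRequestMessage BuildReportRequest(
        string instanceUrl, string apiVersion, string reportId, string accessToken)
    {
        // Same path shape as the RestSharp example:
        // /services/data/{version}/analytics/reports/{reportId}
        string path = "/services/data/" + apiVersion
            + "/analytics/reports/" + Uri.EscapeDataString(reportId);

        var request = new HttpRequestMessage(
            HttpMethod.Get, new Uri(new Uri(instanceUrl), path));

        // The access token travels in the Authorization header as a bearer token.
        request.Headers.Authorization =
            new AuthenticationHeaderValue("Bearer", accessToken);

        return request;
    }
}
```

The request can then be sent with an HttpClient instance via SendAsync, and the JSON body read from the response with Content.ReadAsStringAsync().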

Conclusion

As described, this is a pretty simple example of how to authenticate and request a report from the Salesforce Reports and Dashboards REST API using C#. Hopefully this can be a jumping-off point for accessing this data. The one major limitation for me is that the API only returns 2,000 records, which is especially frustrating if your Salesforce org has a lot of data. In the near future I will be writing a companion post on how to get around this limitation.

Error 40197 Error Code 4815 Bulk Insert into Azure SQL Database

I have been working on a large-scale ETL project, and one of the data sources I regularly pull data from is Salesforce. Anyone who has worked with Salesforce knows it can be a free-for-all with object field changes. This alone makes pulling data regularly from the Salesforce REST API difficult, since there can be so much activity on the objects. On top of that, the number of custom fields that can be added to a single object ranges into the hundreds. With these factors in play, it can be a daunting task to debug errors when they inevitably crop up.

During a recent run of my process I started to receive the following error:

Error 40197 The service has encountered an error processing your request. Please try again. Error code 4815. A severe error occurred on the current command. The results, if any, should be discarded.

Here is a list of error codes, although it was not much help in my situation: SQL error codes

In my ETL process I am using Entity Framework and EntityFramework.BulkInsert-ef6, so I am doing bulk inserts into my Azure SQL Database. Since there is a good chance that a change to the object definition in Salesforce could be the cause of this error, that is where I started to investigate. As it turns out, the length of one of the fields was changed from 40 to 60, which means the original table I created with a varchar(40) column is going to have a problem. In my case this error happened when the data was larger than the column size defined in the table. Hopefully this post will give someone else another troubleshooting avenue for this error.
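One way to surface this kind of mismatch before the bulk insert runs is a simple length check over the rows. The following is only a sketch under assumptions: it is not part of Entity Framework or the BulkInsert package, the ColumnLengthCheck class and FindOversizedValues method are hypothetical names, and the column limits are passed in by hand rather than read from the database schema.

```csharp
using System;
using System.Collections.Generic;

public static class ColumnLengthCheck
{
    // Returns one message per value that is longer than its column allows.
    // maxLengths maps a column name to its varchar size (for example, 40).
    public static List<string> FindOversizedValues(
        IDictionary<string, int> maxLengths,
        IEnumerable<IDictionary<string, string>> rows)
    {
        var problems = new List<string>();
        int rowIndex = 0;

        foreach (var row in rows)
        {
            foreach (var field in row)
            {
                int max;
                // Columns without a known limit and null values are skipped.
                if (field.Value != null
                    && maxLengths.TryGetValue(field.Key, out max)
                    && field.Value.Length > max)
                {
                    problems.Add("Row " + rowIndex + ": " + field.Key
                        + " has length " + field.Value.Length
                        + ", column allows " + max);
                }
            }
            rowIndex++;
        }

        return problems;
    }
}
```

Running a check like this against a batch that fails with error 4815 points directly at the offending column, instead of leaving only the generic "severe error" message to go on.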

Upgrading to Microsoft.Azure.Management.DataLake.Store 0.10.1-preview to Access Azure Data Lake Store Using C#

Introduction

Microsoft recently released a new nuget package to programmatically access the Azure Data Lake Store. In a previous post, Accessing Azure Data Lake Store from an Azure Data Factory Custom .Net Activity, I used Microsoft.Azure.Management.DataLake.StoreFileSystem 0.9.6-preview to programmatically access the data lake using C#. In this post I will go through what needs to change in my previous code to upgrade to the new nuget package. I will also include a new version of the DataLakeHelper class which uses the updated sdk.

Upgrade Path

Since I already have a sample project utilizing the older sdk (Microsoft.Azure.Management.DataLake.StoreFileSystem 0.9.6-preview), I will use that as an example on what needs to be modified to use the updated nuget package (Microsoft.Azure.Management.DataLake.Store 0.10.1-preview).

The first step is to remove all packages which supported the obsolete sdk. Here is the list of all packages that can be removed:

- Hyak.Common

- Microsoft.Azure.Common

- Microsoft.Azure.Common.Dependencies

- Microsoft.Azure.Management.DataLake.StoreFileSystem

- Microsoft.Bcl

- Microsoft.Bcl.Async

- Microsoft.Bcl.Build

- Microsoft.Net.Http

All of these dependencies are needed when using the DataLake.StoreFileSystem package. In my previous sample I was also using Microsoft.Azure.Management.DataFactories in order to create a custom activity for Azure Data Factory. Unfortunately, that package depends on all of the above packages as well. Please be careful when removing these packages, as your own applications might have other dependencies on them. In order to show that these packages are no longer needed, my new sample project is just a simple console application using the modified DataLakeHelper class, which can be found here on github.

Now let’s go through the few changes that need to be made to the DataLakeHelper class in order to use the new nuget package. The following functions from the original DataLakeHelper class will need to be modified:

- create_adls_client()

- execute_create(string path, MemoryStream ms)

- execute_append(string path, MemoryStream ms)

Here is the original code for create_adls_client():

private void create_adls_client()

{

var authenticationContext = new AuthenticationContext($"https://login.windows.net/{tenant_id}");

var credential = new ClientCredential(clientId: client_id, clientSecret: client_key);

var result = authenticationContext.AcquireToken(resource: "https://management.core.windows.net/", clientCredential: credential);

if (result == null)

{

throw new InvalidOperationException("Failed to obtain the JWT token");

}

string token = result.AccessToken;

var _credentials = new TokenCloudCredentials(subscription_id, token);

inner_client = new DataLakeStoreFileSystemManagementClient(_credentials);

}

In order to upgrade to the new sdk, there are 2 changes that need to be made.

- The DataLakeStoreFileSystemManagementClient requires a ServiceClientCredentials object

- You must set the azure subscription id on the newly created client

The last 2 lines should now look like this:

var _credentials = new TokenCredentials(token);

inner_client = new DataLakeStoreFileSystemManagementClient(_credentials);

inner_client.SubscriptionId = subscription_id;

Now that we can successfully authenticate again with the Azure Data Lake Store, the next change is to the create and append methods.

Here is the original code for execute_create(string path, MemoryStream ms) and execute_append(string path, MemoryStream ms):

private AzureOperationResponse execute_create(string path, MemoryStream ms)

{

var beginCreateResponse = inner_client.FileSystem.BeginCreate(path, adls_account_name, new FileCreateParameters());

var createResponse = inner_client.FileSystem.Create(beginCreateResponse.Location, ms);

Console.WriteLine("File Created");

return createResponse;

}

private AzureOperationResponse execute_append(string path, MemoryStream ms)

{

var beginAppendResponse = inner_client.FileSystem.BeginAppend(path, adls_account_name, null);

var appendResponse = inner_client.FileSystem.Append(beginAppendResponse.Location, ms);

Console.WriteLine("Data Appended");

return appendResponse;

}

The change for both of these methods is pretty simple: the BeginCreate and BeginAppend methods are no longer available, and the new Create and Append methods now take the path and the Azure Data Lake Store account name directly.

With the changes applied the new methods are as follows:

private void execute_create(string path, MemoryStream ms)

{

inner_client.FileSystem.Create(path, adls_account_name, ms, false);

Console.WriteLine("File Created");

}

private void execute_append(string path, MemoryStream ms)

{

inner_client.FileSystem.Append(path, ms, adls_account_name);

Console.WriteLine("Data Appended");

}

Conclusion

As you can see, it was not difficult to upgrade to the new version of the sdk. Unfortunately, since these are all preview bits, changes like this can happen. Hopefully this sdk has found its new home and won’t go through too many more breaking changes for the end user.

StackOverflow March 2016 Contributions

Below are links to questions I have contributed to on StackOverflow. If you have time, or if any of the questions interest you, take a moment to review the question and its answers. If you feel one of the answers appropriately answers the question, please vote it up to better serve the community and future users looking for an answer to a similar question.

- Power BI dynamic filtering according to user account

- how to connect to Power BI API using non-interactive authentication?

- How can you fetch data from an http rest endpoint as an input for an Azure data factory?

- Can’t find firewall settings for SQL Server

- Is Azure Data Factory suitable for downloading data from non-Azure REST APIs?

- Azure Data Factory – Bulk Import from Blob to Azure SQL

- Azure Data Factory – Multiple activities in Pipeline execution order

- Aligning columns in MVC4 Index.cshtml

- Error trying to move data from Azure table to DataLake store with DataFactory

Get All Users from JIRA REST API with C#

Introduction

I have been doing a lot of work integrating with various systems, which leads to the need to utilize many different APIs. One common data point I inevitably need to pull from the target system is a list of all users. I have recently been working with the JIRA REST API, and unfortunately there is no single method to get a list of all users. In this post I will provide a simple example in C# utilizing the /rest/api/2/user/search method to gather the list of users.

Prerequisites

- You will need access to a JIRA instance with the REST API enabled

- Visual Studio. Visual Studio Community 2015

- Create a new console application

- Install Atlassian.SDK nuget package

Visual Studio

First create a simple user object to model the json data being returned from the api.

public class User

{

public bool Active { get; set; }

public string DisplayName { get; set; }

public string EmailAddress { get; set; }

public string Key { get; set; }

public string Locale { get; set; }

public string Name { get; set; }

public string Self { get; set; }

public string TimeZone { get; set; }

}

Next we create a simple wrapper around the jira client provided by the Atlassian SDK.

The Jira client has built-in functions mostly for getting issues or projects from the API. Luckily, it exposes the underlying REST client, so you can execute any request you want against the Jira API. In the GetAllUsers method I make a request to user/search?username={item} for each letter while iterating through the alphabet. This request searches the username, name or email address of the user object in Jira. Since a username will likely contain more than one letter, the results of the individual requests will overlap, so we have to check that each user in a result set is not already in our list. Clearly this is not going to be the most performant method; however, there is no other way to gather a full list of all users. Finally, we can create the Jira helper wrapper and invoke the GetAllUsers method.

class Program

{

static void Main(string[] args)

{

var helper = new JiraApiHelper();

var users = helper.GetAllUsers();

}

}
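The iterate-and-deduplicate logic described for GetAllUsers can be sketched roughly as follows. This is an illustration rather than the wrapper from the original project: JiraUser is a trimmed-down stand-in for the User class above, UserSearchSketch is a made-up name, and the search delegate is a hypothetical placeholder for one call to user/search?username={item} through the Jira REST client.

```csharp
using System;
using System.Collections.Generic;

// Trimmed-down stand-in for the User class shown earlier.
public class JiraUser
{
    public string Key { get; set; }
    public string DisplayName { get; set; }
}

public static class UserSearchSketch
{
    // 'search' stands in for one request to user/search?username={term}.
    public static List<JiraUser> GetAllUsers(
        Func<string, IEnumerable<JiraUser>> search)
    {
        // Keyed by the user's Key so repeated hits are stored only once.
        var byKey = new Dictionary<string, JiraUser>();

        for (char letter = 'a'; letter <= 'z'; letter++)
        {
            foreach (var user in search(letter.ToString()))
            {
                // A user matching several letters comes back several times;
                // keep only the first copy.
                if (!byKey.ContainsKey(user.Key))
                {
                    byKey[user.Key] = user;
                }
            }
        }

        return new List<JiraUser>(byKey.Values);
    }
}
```

Using a dictionary keyed on the user key keeps the duplicate check cheap, which matters a little when each of the 26 requests returns many overlapping users.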

Conclusion

As I stated above this solution is not going to be performant, especially if the Jira instance has a large number of users. However, if the need is to get the entire universe of users for the Jira instance then this is one approach that accomplishes that goal.

Azure Data Factory Pipeline Not Running (Pending Execution)

When starting out with Azure Data Factory, the first example usually completed is a basic copy activity. A great tutorial on moving data from an Azure blob to an Azure SQL table can be found here. I think one of the key pieces of the data movement tutorial that gets missed is setting the external property on the input blob JSON definition to true. Setting this to true lets Azure Data Factory know that the data is generated externally and does not come from another pipeline in the factory. When I began trying my hand at creating pipelines I completely missed this note and was left scratching my head, wondering why the pipeline would never execute. With that being said, I want to walk through a few screens from the Azure portal that are very helpful when trying to understand what is happening with your pipeline.

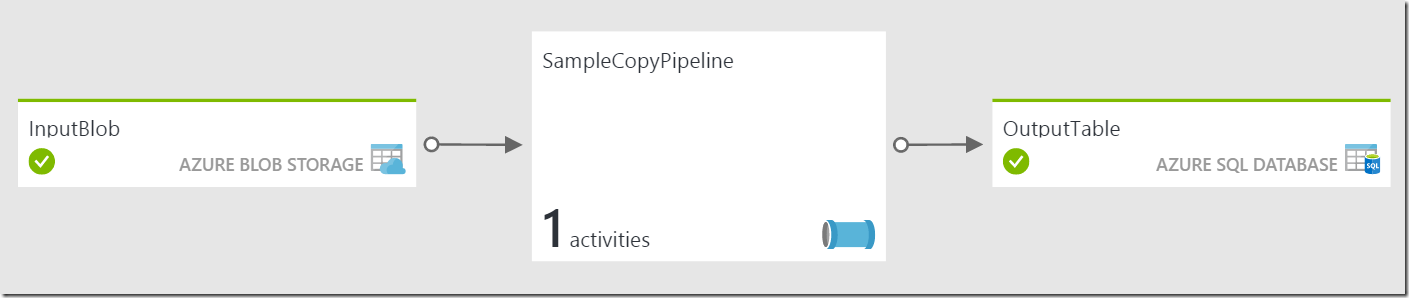

Suppose we have a simple copy example as follows:

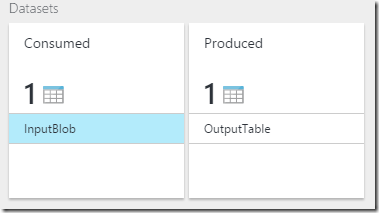

Looking at the SampleCopyPipeline blade you will see both datasets:

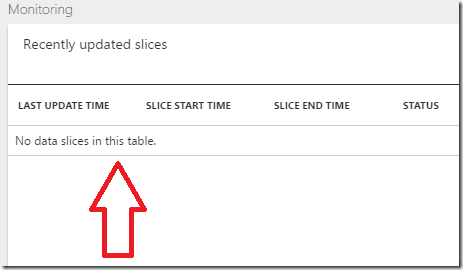

When you select the InputBlob to open that blade, you will notice the first problem. In the monitoring section of the InputBlob, there are no slices ready to be worked on. The output of the copy activity is directly dependent on there being an input that needs to be processed.

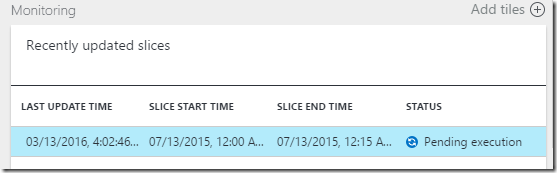

Now, when you select the OutputTable to open that blade, you will see the common Pending Execution status for the output slice.

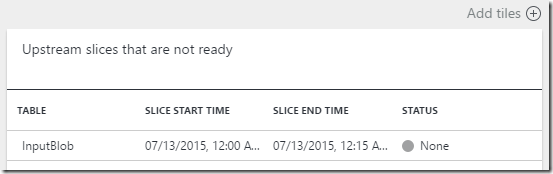

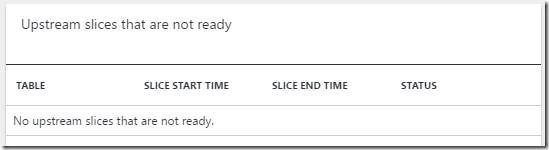

As I mentioned before, the output slice is directly dependent on the input slice: for the output slice to begin processing, the corresponding input slice needs to be ready. We can see the culprit once we select the output slice to open the output slice blade, which shows the upstream slices it is waiting for.

The input slice is there; however, its status is None. The solution, as I stated above, is to set the external property of the input blob to true. Once that is done, the input blob blade shows a slice that is Ready for processing.

We can also see the corresponding output slice blade no longer shows it is waiting on the input slice.
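For reference, the fix amounts to a single property in the input dataset definition. The fragment below is only a sketch of what such a definition might look like; the linked service name, folder path and availability values are placeholders rather than values from the tutorial, and only the "external": true line is the point here.

```json
{
  "name": "InputBlob",
  "properties": {
    "type": "AzureBlob",
    "linkedServiceName": "StorageLinkedService",
    "typeProperties": {
      "folderPath": "container/input/"
    },
    "external": true,
    "availability": {
      "frequency": "Hour",
      "interval": 1
    }
  }
}
```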

Conclusion

When first learning about Azure Data Factory, it may be difficult to follow exactly what is happening with the pipeline. The Azure portal and the new Azure Data Factory Monitoring App give you a lot of information about the status of your pipeline. Hopefully this example gave you a simple way to start using the portal to better understand what is happening and to troubleshoot any issues.

Starting an Azure Data Factory Pipeline from C# .Net

Introduction

Azure Data Factory (ADF) does an amazing job orchestrating data movement and transformation activities between cloud sources. Sometimes you may also need to reach into your on-premises systems to gather data, which is possible with ADF through data management gateways. However, you may run into a situation where you already have local processes running, or you cannot run a specific process in the cloud, but you still want an ADF pipeline to depend on the data being processed locally. For example, you may have an ETL process that begins with a locally run process that stores data in Azure Data Lake. Once that process has completed, you want the ADF pipeline to begin processing that data, followed by any other activities or pipelines. The key is starting the ADF pipeline only after the local process has completed. This post will highlight how to accomplish this through the use of the Data Factory Management API.

Prerequisites

- You will need an Azure Subscription. Free Trial

- Visual Studio. Visual Studio Community 2015

- A Data Factory you want to manually start from .Net

Continue reading “Starting an Azure Data Factory Pipeline from C# .Net”

Resolving InvalidTemplate Error Running Azure Logic App Manually (Run Now)

Problem

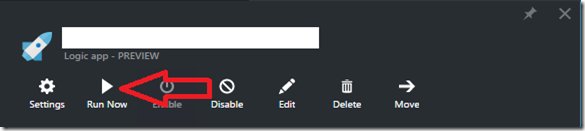

Recently I have been testing out Azure Logic Apps as a possible tool for enterprise application data synchronization. The first example I spiked out was to pull newly entered data from a table in an Azure SQL Database and push it into a Salesforce object. This is a pretty common example and extremely easy to implement with an Azure Logic App. My scenario included a Microsoft SQL Connector to my Azure SQL Database with a polling query set to execute every hour. I added a Salesforce Connector mapping the database fields to the custom object defined in Salesforce. The result is a very basic logic app, with a SQL trigger and a create action on the Salesforce Connector. The next step was to test whether it worked. I could have waited out the hourly execution interval, but I noticed a Run Now button on the main logic app blade.

Every time I clicked the Run Now button for the logic app I received the following error:

{"code":"InvalidTemplate","message":"Unable to process template language expressions in action 'salesforceconnector' inputs at line '1' and column '11': 'Template language expression cannot be evaluated: the property 'outputs' cannot be selected.'."}

Solution

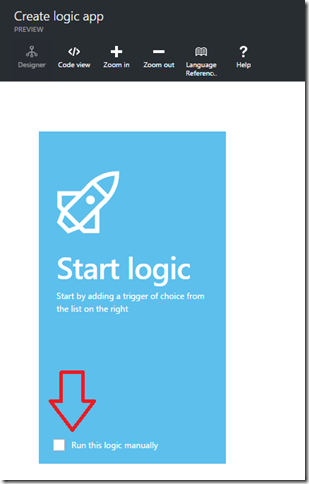

The solution here is pretty simple: when you have a logic app with a trigger defined, DO NOT click the Run Now button. If you would like to run the logic app manually, you will notice that the first tile that comes up has a check box to do just that.

I never even noticed this option until I ran into this issue. Use this option if you need manual control over the logic app execution.

Note: As of this writing I do know the logic app designer is getting a major overhaul in the coming weeks. I will do my best to update this post once those new bits become available. Watch this to see a demo of what is coming.

Accessing Azure Data Lake Store from an Azure Data Factory Custom .Net Activity

04/05/2016 Update: If you are looking to use the latest version of the Azure Data Lake Store SDK (Microsoft.Azure.Management.DataLake.Store 0.10.1-preview) please see my post Upgrading to Microsoft.Azure.Management.DataLake.Store 0.10.1-preview to Access Azure Data Lake Store Using C# for what needs to be done to update the DataLakeHelper class.

Introduction

When working with Azure Data Factory (ADF), having the ability to take advantage of Custom .Net Activities greatly expands the ADF use case. One particular example where a Custom .Net Activity is necessary would be when you need to pull data from an API on a regular basis. For example you may want to pull sales leads from the Salesforce API on a daily basis or possibly some search query against the Twitter API every hour. Instead of having a console application scheduled on some VM or local machine, this can be accomplished with ADF and a Custom .Net Activity.

With the data extraction portion complete the next question is where would the raw data land for continued processing? Azure Data Lake Store of course! Utilizing the Azure Data Lake Store (ADLS) SDK, we can land the raw data into ADLS allowing for continued processing down the pipeline. This post will focus on an end to end solution doing just that, using Azure Data Factory and a Custom .Net Activity to pull data from the Salesforce API then landing it into ADLS for further processing. The end to end solution will run inside a Custom .Net Activity but the steps here to connect to ADLS from .net are universal and can be used for any .net application.

Prerequisites

- You will need an Azure Subscription. Free Trial

- Visual Studio. Visual Studio Community 2015

- Azure Data Lake Store (ADLS)

Continue reading “Accessing Azure Data Lake Store from an Azure Data Factory Custom .Net Activity”