When you start learning Azure Data Factory, the first example you usually complete is a basic copy activity. A great tutorial on moving data from an Azure blob to an Azure SQL table can be found here. I think one of the most commonly missed pieces of that data movement tutorial is setting the `external` property to `true` in the input blob's JSON definition. Setting it to `true` tells Azure Data Factory that the data is generated externally rather than produced by another pipeline in the factory.

When I began trying my hand at creating pipelines, I completely missed this note. I was left scratching my head over why the pipeline never executed. With that in mind, I want to walk through a few screens in the Azure portal that are very helpful for understanding what is happening with your pipeline.
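Before getting to the portal, here is roughly what that setting looks like. The following minimal sketch builds an input blob dataset definition in the Data Factory (v1) JSON shape and prints it. The linked service name, folder path, file name, and hourly availability are hypothetical placeholders modeled on the copy tutorial, not values taken from this post. The line that matters is `"external": true` under `properties`.

```python
import json

# Hypothetical ADF (v1) dataset definition for the input blob.
# Only "external" is the point of this example; the other values are placeholders.
input_blob = {
    "name": "InputBlob",
    "properties": {
        "type": "AzureBlob",
        "linkedServiceName": "AzureStorageLinkedService",  # hypothetical name
        "typeProperties": {
            "folderPath": "adftutorial/",  # hypothetical container/folder
            "fileName": "emp.txt",         # hypothetical file
            "format": {"type": "TextFormat", "columnDelimiter": ","},
        },
        # Marks the data as produced outside this factory, so Data Factory
        # does not wait for another pipeline to generate these slices.
        "external": True,
        "availability": {"frequency": "Hour", "interval": 1},
    },
}

# Serialize to the JSON you would paste into the dataset editor.
definition = json.dumps(input_blob, indent=2)
print(definition)
```

Python's `True` serializes to JSON `true`. When you edit the dataset in the portal, you add the same `"external": true` line directly under `properties`.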

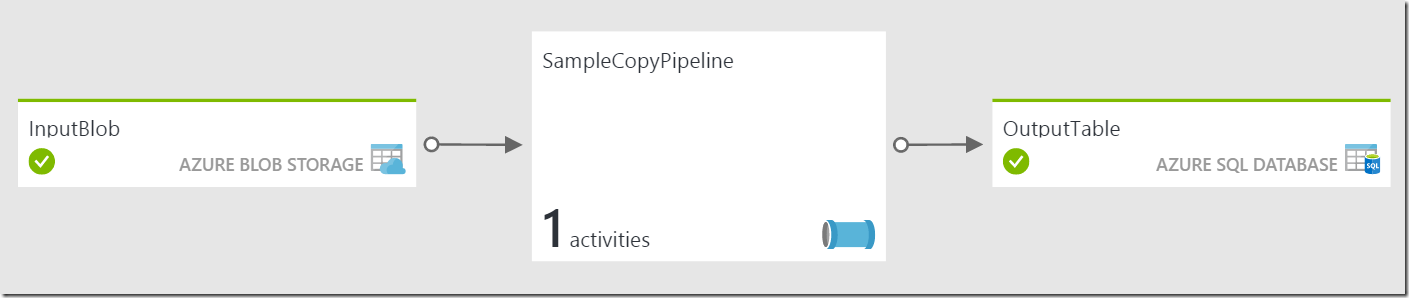

Suppose we have a simple copy example as follows:

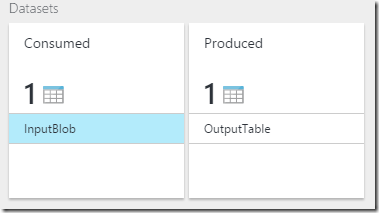

On the SampleCopyPipeline blade, you will see both datasets:

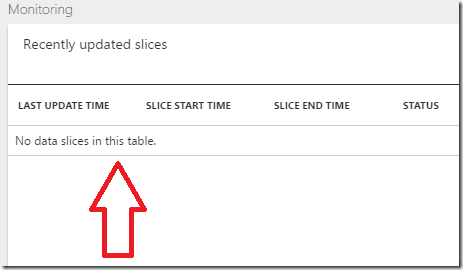

Select InputBlob to open its blade, and you will notice the first problem. The Monitoring section of the InputBlob blade shows no slices ready to be processed. The output of the copy activity depends directly on there being an input slice to process.

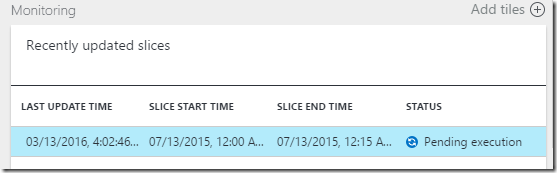

Next, select OutputTable to open its blade. There you will see the familiar Pending Execution status on the output slice.

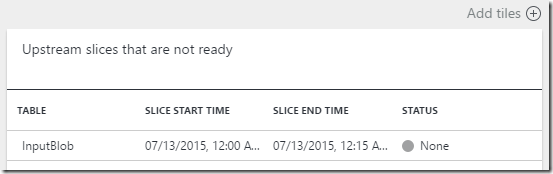

As mentioned above, the output slice cannot begin processing until its corresponding input slice is ready. Select the output slice to open the output slice blade, and you can see the culprit. That blade lists the upstream slices the output slice is waiting on.

The input slice is listed there, but its status is None. As noted above, the fix is to set the `external` property of the input blob dataset to `true`. Once that is done, the InputBlob blade shows a slice that is Ready for processing.
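The same check can be approximated offline. The following hypothetical helper is not part of any Azure SDK. It flags datasets that a pipeline consumes but never produces and that also lack `"external": true`, which is the situation that left the input slice at None here. The sketch assumes the definitions have already been loaded as Python dictionaries in the v1 JSON shape. It also inspects only a single pipeline. A dataset produced by a different pipeline in the same factory would therefore be reported incorrectly.

```python
from typing import Dict, List, Set


def stuck_inputs(pipeline: dict, datasets: Dict[str, dict]) -> List[str]:
    """Return input datasets that no activity in this pipeline produces
    and that are not marked external (hypothetical helper)."""
    activities = pipeline["properties"]["activities"]
    # Datasets written by some activity in this pipeline.
    produced: Set[str] = {o["name"] for a in activities for o in a.get("outputs", [])}
    # Datasets read by some activity in this pipeline.
    consumed: Set[str] = {i["name"] for a in activities for i in a.get("inputs", [])}
    stuck = []
    for name in sorted(consumed - produced):
        props = datasets.get(name, {}).get("properties", {})
        # No producer and no "external": true means nothing marks its slices Ready.
        if not props.get("external", False):
            stuck.append(name)
    return stuck


# Minimal pipeline shaped like SampleCopyPipeline (the activity name is hypothetical).
pipeline = {
    "name": "SampleCopyPipeline",
    "properties": {
        "activities": [
            {
                "name": "CopyBlobToSql",
                "type": "Copy",
                "inputs": [{"name": "InputBlob"}],
                "outputs": [{"name": "OutputTable"}],
            }
        ]
    },
}

before = {"InputBlob": {"properties": {"type": "AzureBlob"}},
          "OutputTable": {"properties": {"type": "AzureSqlTable"}}}
after = {"InputBlob": {"properties": {"type": "AzureBlob", "external": True}},
         "OutputTable": {"properties": {"type": "AzureSqlTable"}}}

print(stuck_inputs(pipeline, before))  # ['InputBlob']
print(stuck_inputs(pipeline, after))   # []
```

The helper only reads the definitions. It cannot see slice statuses, so the portal blades remain the authoritative view of what the service is actually doing.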

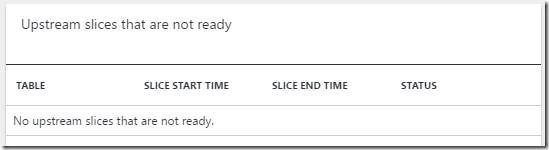

The corresponding output slice blade also no longer shows that it is waiting on the input slice.

Conclusion

When you are first learning Azure Data Factory, it can be difficult to follow exactly what is happening with a pipeline. The Azure portal and the new Azure Data Factory Monitoring App give you a lot of information about your pipeline's status. Hopefully this example gave you a simple way to start using the portal to understand what is happening and to troubleshoot any issues.